Search visibility is no longer only about ranking pages. It is increasingly about being chosen as a source within AI-generated answers, and many brands struggle to understand why their content is not cited even when it ranks well. Traditional SEO signals like keywords and backlinks still matter, but they no longer guarantee inclusion in AI-generated responses.

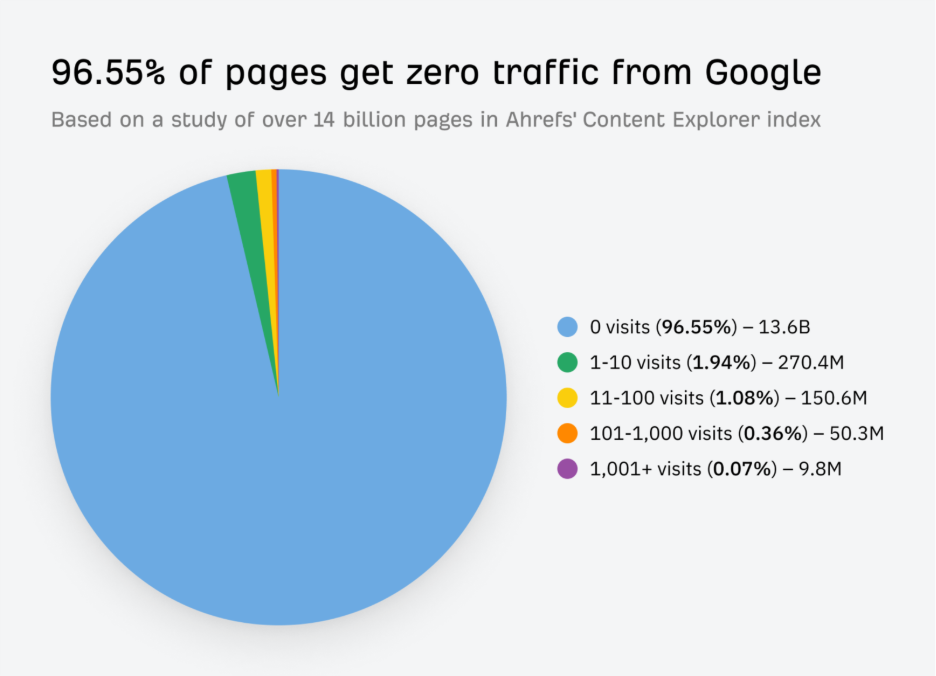

According to Ahrefs, 96.55% of pages receive no organic traffic, which shows that visibility was already hard to achieve before any AI filtering is applied. Large language models now apply additional layers of trust before deciding which sources to use.

This creates a new challenge: content must be well structured, demonstrate clear expertise, and be consistently referenced across multiple credible sources. The key is to understand the AI Trust Stack, a layered model that describes how LLMs build credibility from signals such as topical authority, entity recognition, citations, and contextual links.

This post breaks down each layer and shows how to align your strategy with modern AI evaluation and generative AI evaluation.

What Is the AI Trust Stack?

The AI Trust Stack is a layered framework that describes how large language models decide whether a source is reliable enough to use in generated answers. Rather than relying only on keywords or link metrics, LLMs use probabilistic retrieval, semantic comprehension, and cross-source validation to evaluate credibility.

Each layer adds a specific trust signal, starting with crawlable, well-structured content that can be split into meaningful passages. Topical depth then reveals whether a brand consistently covers a subject, whereas entity recognition helps the model recognize that brand as a distinct concept.

Repeated mentions across multiple sources raise the probability of citation, while contextual backlinks reinforce those mentions by validating the brand’s expertise within relevant discussions. Together, these layers form a multi-signal system in which inclusion depends on consistency, which directly affects AI evaluation and strengthens generative AI evaluation.

Breaking Down the AI Trust Stack Layers

To see how this multi-signal system works in practice, it helps to look at each layer in turn. Each layer contributes its own kind of trust signal, and together they determine which content can be included in AI-generated responses.

Layer 1: Crawlable and Structured Content

Before an LLM can evaluate credibility, it has to be able to read the content at a structural level. Clean HTML, a clear heading hierarchy, and semantic formatting let models divide pages into parts that can be retrieved when generating responses.

When information is presented in a logical order, the system can find definitions, steps, and key points without guessing, which makes it more likely that relevant passages will be chosen as supporting evidence.
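To make the idea of dividing a page into retrievable parts concrete, here is a minimal sketch that groups a page's visible text under its nearest preceding heading. It is only an illustration of heading-based chunking using Python's standard `html.parser`; the names `HeadingChunker` and `chunk_by_headings` are hypothetical, and real retrieval pipelines may split content quite differently.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingChunker(HTMLParser):
    """Hypothetical helper: collects visible text under each heading."""

    def __init__(self):
        super().__init__()
        self.chunks = []        # list of {"heading": str, "text": str}
        self._in_heading = False
        self._skip_depth = 0    # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag in HEADING_TAGS:
            # Every heading starts a new chunk.
            self._in_heading = True
            self.chunks.append({"heading": "", "text": ""})

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1
        elif tag in HEADING_TAGS:
            self._in_heading = False

    def handle_data(self, data):
        text = data.strip()
        if not text or self._skip_depth:
            return
        if not self.chunks:
            # Text before the first heading goes into a chunk with no heading.
            self.chunks.append({"heading": "", "text": ""})
        key = "heading" if self._in_heading else "text"
        current = self.chunks[-1]
        current[key] = (current[key] + " " + text).strip()


def chunk_by_headings(html):
    """Return a list of heading/text chunks for the given HTML string."""
    parser = HeadingChunker()
    parser.feed(html)
    parser.close()
    return parser.chunks


sample = (
    "<h1>Guide</h1><p>Intro text.</p>"
    "<h2>Steps</h2><ol><li>First</li><li>Second</li></ol>"
)
for chunk in chunk_by_headings(sample):
    print(chunk["heading"], "->", chunk["text"])
```

Running a page through a check like this is a quick way to see whether each heading actually introduces a self-contained passage, or whether key points end up stranded under an unrelated heading.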

Schema markup adds context about entities, authors, and content types, while FAQ blocks and ordered lists help map the connections between concepts. By contrast, cluttered layouts reduce retrieval accuracy and weaken trust cues.
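As an illustration of the schema markup mentioned above, the sketch below builds JSON-LD for an article and an FAQ block using schema.org's `Article`, `Person`, `Organization`, `FAQPage`, `Question`, and `Answer` types. The function names and all sample values (headline, author, publisher, date) are placeholders invented for the example, not details from this post.

```python
import json


def article_schema(headline, author_name, publisher_name, date_published):
    """Build a schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": date_published,
    }


def faq_schema(question_answer_pairs):
    """Build a schema.org FAQPage from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in question_answer_pairs
        ],
    }


def to_script_tag(data):
    """Serialize a schema dict into a JSON-LD <script> element."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so a string value cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for illustration only.
article = article_schema(
    "Example headline", "Jane Doe", "Example Publisher", "2025-01-15"
)
faq = faq_schema([("What is a trust signal?", "A cue that supports credibility.")])
print(to_script_tag(article))
print(to_script_tag(faq))
```

The resulting `<script>` elements would typically be placed in the page's HTML so that the author, publisher, and question-and-answer structure are stated explicitly rather than inferred from layout.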

Even though this layer does not establish authority, it defines eligibility for inclusion and serves as a gateway to AI evaluation.

Layer 2: Topical Authority Over Keyword Targeting

Topical authority now matters more than keyword targeting on individual pages, because LLMs assess subject coverage rather than single-page relevance. As a site publishes interconnected content around a defined theme, it forms a semantic network that signals expertise.

Internal links from a hub page to supporting articles also help models identify depth and continuity, which improves retrieval consistency.

Semrush found that users arriving from search results visit 3 to 3.5 pages per visit on average, which highlights the importance of comprehensive content coverage. Websites with fragmented or disconnected content struggle to attract users and are less likely to be accepted as authoritative sources on a topic.

Conversely, when coverage spans several pages, it is more likely that multiple passages from the same domain will be retrieved, which supports credibility. This layer strengthens generative AI evaluation by providing better contextual alignment.
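One way to audit the hub-and-supporting-article structure described in this layer is to check that links run in both directions. The sketch below assumes you already have a map of each page to the internal URLs it links to (for example, from a site crawl); the `cluster_gaps` function and the example paths are hypothetical.

```python
def cluster_gaps(hub, supporting, links):
    """Report supporting pages that are not fully connected to the hub.

    `links` maps each URL to the set of internal URLs it links to.
    """
    hub_links = links.get(hub, set())
    return {
        # Supporting articles the hub page never links to.
        "missing_from_hub": sorted(
            page for page in supporting if page not in hub_links
        ),
        # Supporting articles that never link back to the hub.
        "missing_back_link": sorted(
            page for page in supporting if hub not in links.get(page, set())
        ),
    }


# Hypothetical site structure for illustration.
links = {
    "/ai-trust": {"/ai-trust/schema", "/ai-trust/entities"},
    "/ai-trust/schema": {"/ai-trust"},
    "/ai-trust/entities": set(),
    "/ai-trust/citations": {"/ai-trust"},
}
supporting = ["/ai-trust/schema", "/ai-trust/entities", "/ai-trust/citations"]
print(cluster_gaps("/ai-trust", supporting, links))
```

Pages that show up in either list are candidates for new internal links, since they are the ones most likely to look isolated from the rest of the topic cluster.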

Layer 3: Brand as an Entity, Not Just a Website

LLMs interpret brands as entities rather than isolated pages, so trust depends on how clearly a brand is defined across the web. Consistent naming, detailed descriptions of sections, author profiles, and structured organization data help models identify a brand as a distinct concept. When the same entity appears in authoritative contexts, stronger associations form between the brand and its core topics.

Contextual signals are also required for entity clarity. Mentions that include roles, expertise, and industry relevance help models map relationships within their knowledge graph.

Without this context, a brand may be treated as a generic reference rather than a specialized source. This layer supports AI evaluation by enabling proper entity recognition, and it strengthens generative AI evaluation through consistent source attribution.

Layer 4: Citation Frequency Across the Web

Consistent mentions across independent domains are a strong trust signal because they show that several different sources recognize the brand within a specific context.

LLMs analyze these recurring patterns to distinguish information that is widely recognized from information that is only self-published. Listicles, comparison pages, reviews, and editorial references all increase citation frequency.

Backlinko states that 94% of blog posts have no external links, which shows how rare third-party validation actually is and why recurring mentions matter in credibility evaluation.

When a brand appears in many independent sources, it indicates cross-domain recognition rather than self-promotion. This broader presence increases the chances that models will encounter repeated references during synthesis.

Consequently, citation frequency reinforces AI evaluation by showing corroboration between sources, and it strengthens generative AI evaluation by reducing uncertainty about source selection.
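As a rough way to measure this layer, the sketch below counts how many distinct independent domains mention a brand in a set of pages you have already collected. It is an audit aid under stated assumptions, not a description of how any LLM scores sources: the `citing_domains` function, the brand name, and the example URLs are all hypothetical.

```python
import re
from urllib.parse import urlparse


def citing_domains(brand, documents, own_domain):
    """Return the sorted list of independent domains that mention `brand`.

    `documents` is an iterable of (url, text) pairs. Pages on `own_domain`
    or its subdomains are skipped, since self-published mentions do not
    count as independent. Matching is a simple case-insensitive whole-word
    search, which suits brand names made of letters and digits.
    """
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    domains = set()
    for url, text in documents:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if not host or host == own_domain or host.endswith("." + own_domain):
            continue
        if pattern.search(text):
            domains.add(host)
    return sorted(domains)


# Hypothetical pages for illustration.
documents = [
    ("https://www.reviews.example/top-tools", "We compared Acme and others."),
    ("https://reviews.example/follow-up", "More notes on acme."),
    ("https://blog.acme.example/launch", "Acme launches a new product."),
    ("https://news.example/story", "No mention here."),
    ("https://compare.example/acme-vs-rival", "Acme vs. Rival"),
]
print(citing_domains("Acme", documents, "acme.example"))
```

Counting distinct domains rather than total mentions reflects the point made above: ten mentions on one site say less about cross-domain recognition than one mention each on ten independent sites.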

Layer 5: Backlinks as External Validation

Backlinks remain a trust factor, but their role has shifted from pure ranking impact to contextual confirmation. Instead of relying on domain metrics, LLMs weigh link relevance, editorial positioning, and the surrounding content.

Links within articles that discuss similar topics provide semantic confirmation, whereas generic placements carry little credibility. Likewise, being mentioned alongside brands that people already trust boosts credibility, since it places the source in an established and authoritative knowledge network.

When a page is cited alongside credible parties, the model reads this as shared credibility. Backlinks serve as an external layer of endorsement within the trust stack, supporting AI evaluation and increasing the likelihood that content is considered trustworthy during answer generation.

Freshness vs Authority in LLM Trust

LLMs balance historical authority against up-to-date information to make sure that answers are correct and relevant. Even highly authoritative sources can be deprioritized when their information is out of date, particularly in rapidly evolving industries.

Frequent updates indicate that a source is current rather than built on static content. But freshness alone is not enough to build trust.

New content without supporting authoritative information is less likely to be chosen. The best approach is to combine timeless resources with updates that add new information, examples, or trends.

This balance affects AI evaluation because models consider both reliability and recency when choosing passages. It also strengthens generative AI evaluation by making sure that responses are based on current facts and remain consistent with established sources.

Established Expertise with Attributed Content

Credibility is still based on experience, expertise, authoritativeness, and trustworthiness, yet these qualities play an extended role in AI-driven contexts. Authentic author profiles, published case studies, and clear organizational data provide tangible evidence of practical knowledge.

These factors help models distinguish purely theoretical information from insights based on practical experience. According to Semrush, perceived trust is more likely to develop around content with a clearly identified author and bio, which is consistent with the way LLMs attribute information to familiar and accountable sources.

When authors are consistently linked to certain subjects, their content is more likely to surface for related queries. This layer strengthens AI evaluation because it connects expertise to individuals and organizations. It further improves generative AI evaluation, since attributed content helps the model stay consistent when synthesizing information across multiple passages.

How to Optimize for the AI Trust Stack

Optimizing for the AI Trust Stack means coordinating technical, content, and authority signals. The first step is to make sure that pages are well formatted, with semantic headings, schema, and a logical flow.

Next, building topical clusters establishes subject depth and strengthens the internal relationships between articles. Entity signals should then be reinforced through consistent branding, bio pages, and coverage from reputable sources.

The next step is citation building, which aims to earn mentions within relevant discussions rather than one-off placements. Lastly, editorial backlinks provide external validation that confirms expertise.

This process supports AI evaluation by aligning many layers of trust instead of relying on a single measure. It also strengthens generative AI evaluation, because consistent signals across structure, content, and external recognition increase retrieval confidence.

Common Mistakes That Reduce AI Trust

Some patterns undermine trust signals even when the content itself is well written.

- Thin publishing models that favor volume over depth do not build topical authority.

- Inconsistent branding across platforms prevents entity recognition.

- Over-optimized anchor text and irrelevant link placement weaken contextual credibility.

- A lack of external mentions limits citation frequency and the probability of retrieval.

- Isolated blog posts with no internal links weaken semantic understanding.

These issues matter for AI evaluation because models rely on consistent and corroborated signals. They matter equally for generative AI evaluation, in which fragmented or unsupported content is less likely to be cited in generated responses.

From Visibility to Verifiable Trust

The shift from search rankings to AI-based answers has changed what credibility means. Rather than concentrating on individual metrics, brands need to align structural clarity, topical depth, entity recognition, citations, and contextual links into a coherent trust model.

Each layer reinforces the next, forming a consistent signal that LLMs can validate across several sources. By understanding how these systems conduct AI evaluation and generative AI evaluation, organizations can shift from trying to rank pages to becoming recognized knowledge entities.

This shift requires coordinated effort across content, technical optimization, and external validation, yet it results in more sustainable visibility in AI-based search, where trust determines selection.

About the author: Vibhav Gaur, Business Head

Vibhav Gaur leads strategic operations and business growth at the organization. With a strong background in digital transformation and customer-focused solutions, he has helped numerous clients streamline their web presence and scale efficiently. His leadership ensures seamless execution across teams, with a commitment to delivering results and fostering innovation in every project.